✍️ By Ismael Kherroubi Garcia.

Ismael is Founder & Co-lead of the Responsible Artificial Intelligence Network (RAIN), and Founder & CEO of Kairoi.

📌 Editor’s Note: This article is part of our Tech Futures series, a collaboration between the Montreal AI Ethics Institute (MAIEI) and the Responsible Artificial Intelligence Network (RAIN). The series challenges mainstream AI narratives, proposing that rigorous research and science are better sources of information about AI than industry leaders. This sixth installment of Tech Futures by RAIN tackles the tension between the usefulness of AI in science and the undermining of knowledge systems through the misuse of mainstream AI tools.

AI For Knowledge

How we understand the world depends on how we interpret data about it. In theory, the more data we have about a subject, the better we will understand what is going on. In the 1960s, Margaret Dayhoff, a doctor of philosophy in quantum chemistry, was working on protein sequencing, which is about studying the order of amino acids within proteins. Comparing protein sequences could be critical, as Dayhoff and her co-authors explain in the Atlas of Protein Sequence and Structure (1965):

“Conspicuous in comparative human protein sequences is information of great medical-diagnostic value. A long series of abnormalities has been found to be attributable to single amino acid replacements. One such tragically serious disease is sickle-cell anemia.”

Protein sequencing thus provides a crucial trove of data for the advancement of health sciences. But it’s a lot of data. To make things easier, Dayhoff et al.’s Atlas compiled protein sequences formatted for computational analysis:

“The information is kept in a compact, uniform format on punched cards. New information and corrections are easily inserted, while the text is kept accurate.”

How we store and analyse data with computers has evolved a bit since the 1960s, but Dayhoff’s work served as the foundation of bioinformatics, where computational techniques are applied to life sciences.

Fast-forward a decade or so, and artificial intelligence (AI) was being applied in medical settings. What we now call good old-fashioned AI (GOFAI) was applied through computer-assisted diagnostic tools such as 1971’s Internist-I. Meanwhile, early machine learning (ML) techniques were being tested for analysing X-rays. By 2006, researchers were able to identify ML use cases across the studies of evolution, genomics, proteomics, and systems biology. Fast-forward again to 2021, and Google DeepMind overcame the problem of determining the 3D structure of proteins using AI techniques.

There is no doubt that AI technologies and practices are valuable for the advancement of our understanding of the world around us. But might it be possible that AI can also undermine human knowledge? Wikipedia serves as the world’s largest online encyclopedia, and AI is unfortunately becoming a problem.

AI Against Knowledge

In an interview published January 12th, the founder of Wikipedia, Jimmy Wales, explained: “We don’t ban the use of AI. We do say, ‘be very careful with it,’ and ‘you’re responsible for what you put in Wikipedia.’” Just a couple of months later, on March 20th, “volunteer editors for Wikipedia’s English-language platform formally voted to ban all AI-generated text from its 7.1 million articles.”

What the volunteer editors specifically voted on was a new policy on large language models (LLMs) that states, as of April 11th, 13:06 (UTC):

“The use of LLMs to generate or rewrite article content is prohibited, save for these two exceptions:

1. Editors are permitted to use LLMs to suggest basic copyedits to their own writing, and to incorporate some of them after human review, provided the LLM does not introduce content of its own. Caution is required because LLMs can go beyond what is asked of them and can change the meaning of the text such that it is not supported by the sources cited.

2. Editors are permitted to use LLMs to translate articles from another language’s Wikipedia into the English Wikipedia, but must follow the guidance laid out at Wikipedia: LLM-assisted translation.”

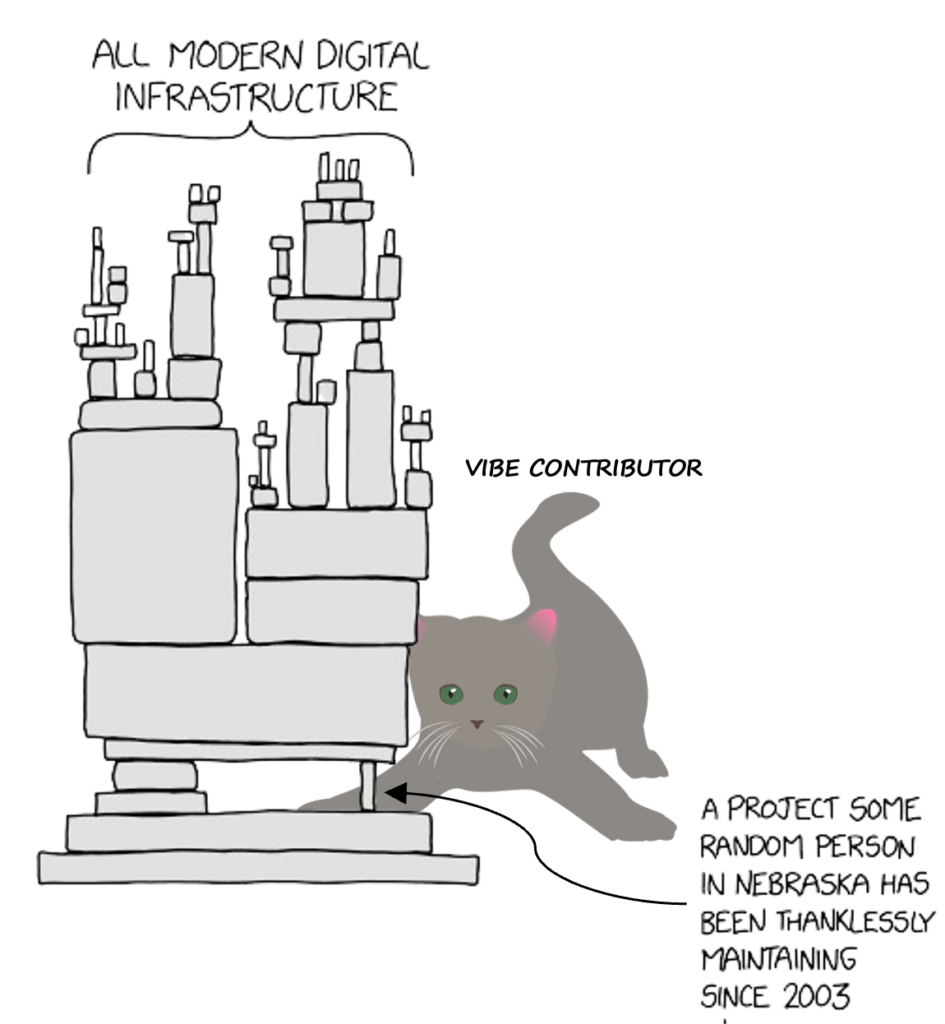

What exactly is driving this almost-outright ban of LLMs in English Wikipedia? A few weeks ago, we discussed the threat AI-generated code poses to the world’s digital infrastructure, as low-quality contributions are inundating maintainers’ inboxes. The proliferation of LLM-based chatbots has meant something similar for Wikipedia: volunteer editors are having to spend disproportionate amounts of time handling AI-generated content. In late 2023, the “AI Cleanup” was launched “to combat the increasing problem of poorly written AI-generated content on Wikipedia.” Some of what editors of AI-generated content have had to contend with are fake citations that are difficult to detect, AI-generated images that look like paintings appropriate to the periods they refer to, and even entire articles about fortresses that never existed.

Caption: © 2026 Responsible Artificial Intelligence Network (RAIN) and Ismael Kherroubi Garcia, CC BY 4.0, adapted from xkcd.com (Dependency) and Ricinator on Pixabay

The way Wikipedia editors are experiencing AI seems to be in tension with what scientists have found in AI for decades. But it is important to note that commercial LLMs, as we currently know them, are a far cry from the sorts of AI and ML techniques deployed across the life sciences since the 1970s. AI is not prima facie problematic for knowledge systems. Science will benefit from improving and implementing different forms of AI for years to come. However, the wide availability of LLM-based chatbots makes it far easier for individuals to generate low-quality content at scale and, intentionally or unintentionally, apply pressure to some of the most important systems for human knowledge, including Wikipedia.

Image credit: Fabrizio Matarese / Better Images of AI / CC BY 4.0