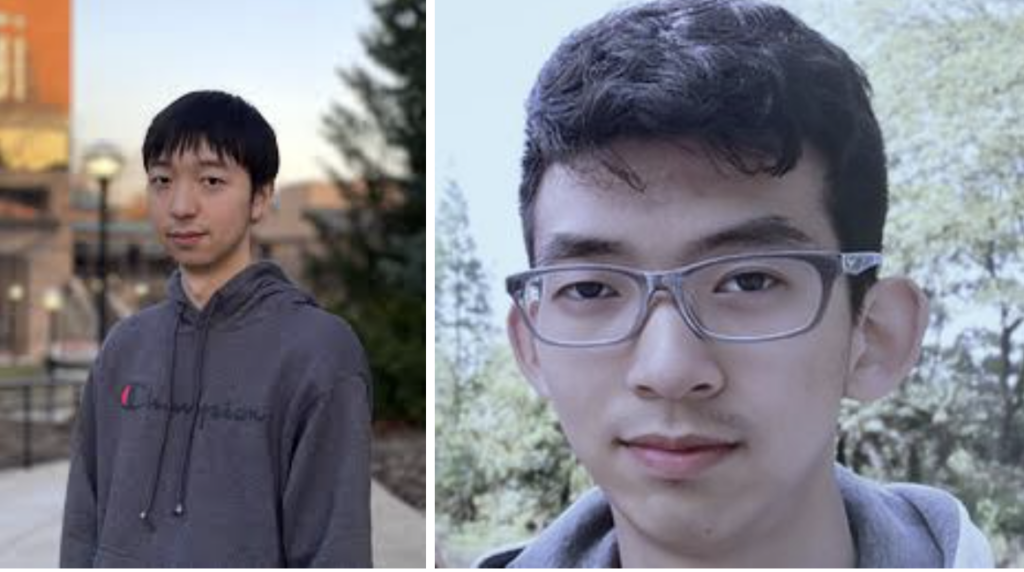

🔬 Research Summary by Gaotang Li and Jiarui Liu.

Gaotang Li is a senior undergraduate student studying Computer Science and Mathematics at the University of Michigan.

Jiarui Liu is a first-year Master’s student in Intelligent Information Systems at the Language Technologies Institute at Carnegie Mellon University.

[Original paper by Gaotang Li, Jiarui Liu, and Wei Hu]

Overview: This paper introduces “Bam,” a novel training algorithm for neural networks that addresses the issue of low accuracy on rare subgroups, a common problem in standard training methods. Bam operates in two stages: firstly, by amplifying bias through auxiliary variables, and secondly, by reweighting the training dataset based on these amplified biases. This approach improves accuracy for underrepresented groups and offers a new method for training neural networks that minimizes the need for costly group annotations.

Introduction

Imagine this: You’re using an AI image classifier to sort your vacation photos, but it keeps mistaking the background for the main subject. Frustrating, right? This is due to what’s known as ‘spurious correlations’ in machine learning, where models make decisions based on irrelevant features. It’s a widespread issue, affecting everything from image recognition to natural language processing and reinforcement learning.

Our research tackles this challenge by focusing on group robustness, aiming to enhance accuracy for the worst-off groups in a dataset. These are the groups where the model’s reliance on irrelevant attributes is most misleading. Traditional methods to improve this accuracy involve a costly process of annotating every training example with group information, which is often impractical. We propose a different approach: Bam. Bam amplifies the biases in an initial model to better guide the training of a subsequent, more balanced model. This novel method promises to enhance group robustness without the extensive need for group annotations in training data, a significant step forward in making AI more reliable and fair.

Key Insights

Unveiling “Bam”: A New Solution to Spurious Correlation

The Challenge: Improving Group Robustness

The key to solving this problem lies in enhancing the model performance on group robustness, which means improving its accuracy for the worst-off groups in the dataset. The model’s reliance on irrelevant features is most misleading in these groups. Traditional methods to enhance this accuracy involve annotating every training example with group information, which is often impractical and expensive.

Introducing Bam: A Novel Two-Stage Approach

We propose “Bam” – a novel, two-stage training algorithm to address these challenges. Bam aims to improve group robustness without requiring extensive group annotations in training data. How does it work? Let’s break it down:

Stage One: Bias Amplification

In the first stage, Bam amplifies the inherent biases in the initial training model. This is achieved by introducing trainable auxiliary variables for each training sample. These variables exaggerate the model’s biases, making them more prominent and easier to identify.

Stage Two: Rebalanced Training

In the second stage, we take the outputs from our bias-amplified model and use them to resample our training dataset. This means giving more importance to the misclassified samples due to amplified biases. The model then continues training on this adjusted dataset, gradually learning to focus on the right features and ignore the misleading ones.

The Results: Improved Accuracy and Reduced Need for Annotations

What makes Bam stand out is its ability to improve the worst-off group’s accuracy without relying heavily on group annotations. Our research shows that Bam can achieve competitive performance compared to existing methods in both computer vision and natural language processing applications. Additionally, Bam introduces a simple stopping criterion based on the minimum class accuracy difference, eliminating the need for group annotations with little or no loss in worst-group accuracy.

Empirical Results and Analysis

Our empirical tests of Bam demonstrate its effectiveness in improving group robustness. Evaluated on various standard benchmark datasets for spurious correlations, Bam achieved competitive worst-group accuracy compared to existing methods. Notably, Bam performs robustly across several hyperparameter choices and dataset characteristics.

Additionally, one of the aspects of Bam is its use of class accuracy difference as a stopping criterion, named “ClassDiff.” This approach allows us to potentially eliminate the need for group annotations with little or no loss in worst-group accuracy. The criterion is based on the observation that a low class accuracy difference is strongly correlated with high worst-group accuracy.

Between the lines

As introduced in this research, Bam represents a significant advancement in tackling spurious correlations in deep learning, specifically focusing on improving the worst-group accuracy across various NLP and CV benchmarks. Its innovative approach uses a bias amplification scheme and an auxiliary variable dubbed ‘ClassDiff’ and has shown effectiveness under various experimental settings.

A theoretical analysis of the bias amplification scheme could provide deeper insights into the mechanisms of how deep learning models develop and rely on spurious correlations. Such an analysis would not only enhance our understanding of the behavior of models but could also guide the development of more robust and fair deep learning systems.